- 1.1 Introduction

- 1.2 The Urbanised Landscape: Constructions of the Urban as Anti-Child

- 1.3 Safeguarding, Crime, and Children: Challenging Traditional Expertise

- 1.4 Technology: Exploring an Unknown Environment

- 1.5 Conclusion

- References

1.1 Introduction

This chapter looks at how expert discourse in both policymaking and academic circles sought to understand and shape children’s lived experiences of place and play in Britain leading up to and during the 1980s, 1990s, and 2000s, bringing different categories of discourse into dialogue. The framework of rules that experts built up attempted to define where children could be and what they could do there, as well as the physical makeup of those places and parental and societal attitudes towards them. This chapter applies du Gay et al.’s analytical framework of the ‘circuit of culture’, which identifies five key aspects to understand when analysing cultural texts: representation, identity, production, consumption, and regulation.1 In particular, Mora et al.’s connection of the circuit to the study of material culture allows this chapter to examine experts’ changing approaches to childhood environments as cultural artefacts to be understood through the circuit’s five key aspects.2 This methodology reveals how the changing attitudes of experts in planning and policymaking circles during the 1980s, 1990s, and 2000s translated into material changes in the character and policing of the environments of British childhoods. Evolving from the ‘child centred’ pedagogy of the post-war era, the late 20th century saw the continued development of discourses that conceived of ‘a universalist model of childhood vulnerability, characterised around an ageless, classless, genderless “child”’.3 At the same time, public discourse (discussed in Chapter Two) was beginning to challenge traditional sources of expertise and to put forward child-led experiential methods, which were sometimes incorporated and sometimes rejected by planners and policymakers. Many academics during this period – in conversation with policymakers and planners but also apart from them – were also in tension with these traditional sources, advancing an alternative vision of childhood that was less safety-conscious and more freedom-conscious.

During the last three decades of the twentieth century in Britain there were three main expert discourses surrounding children and their environments, each concerned with a factor that was perceived to threaten or degrade those environments. As will also become evident in Chapter Two’s assessment of the impact of media discourse, each of these points of concern reflected the moral panics that were coming to surround constructions of childhood. The first threat was the urbanisation of the landscape, more specifically the increasing dominance of cars and roads. The second was fear over dangerous ‘strangers’ on the streets, and also dangerous ‘youth’ from the 1990s onwards. The third was technologies like the TV, games console, mobile phone, and internet. All three subjects captured the attention of experts, but professional opinion was divided. Whilst almost all experts agreed on what these ‘new’ threats to childhood constituted, policymakers and academics were quickly split on proposed solutions. In general, policymakers approached problems from a health-and-safety perspective that led to greater regulation of children’s independence and mobility in response to these threats, as can be seen in the reports, white papers, speeches, and bills produced by the government and civil service during this period. Conversely, academics addressed the same problems from a freedom-and-agency perspective, consistently arguing for less regulation of children and more regulation toward creating child-friendly public spaces whilst also studying the impacts (health and otherwise) of changing policy.

These competing views interacted during a period when public as well as expert attitudes towards the two realms of the ‘outdoors’ and ‘indoors’ were changing. The threats of urbanism and strangers were said to be pushing children away from the outdoors, but it also appeared that technology was pulling them in. The indoor environment was predictable and safe, yet it was also unhealthy and lazy. The outdoors was unpredictable and dangerous, yet also active and exploratory. A set of social stereotypes accompanied these representations: indoors was for girls, outdoors for boys; indoors for the young, outdoors for the old. Furthermore, because these ideas were about the environment, they were contingent not only on a family’s personal wealth and social class, but also on those of the community they lived in.

Chapters Three and Four of this thesis will look at the attitudes and actions of children themselves, those most directly affected by the policy and cultural outcomes of this expert discourse, in two specific North East communities. The subject that most impacted them, I argue, was the one that most drastically altered the physical environment: the expansion of urban, car-based landscapes. This had a very significant impact on where it was deemed appropriate for children to be, and experts played a fundamental role in both implementing and condemning these changes.

1.2 The Urbanised Landscape: Constructions of the Urban as Anti-Child

1.2.1 Driven Out; Driven In: The Rise of the Car

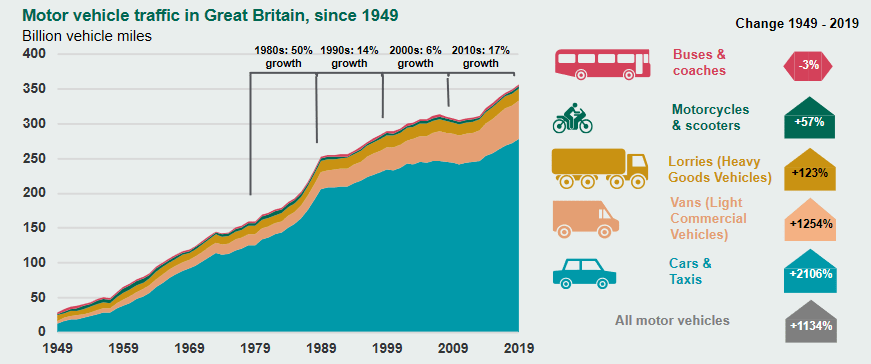

Following the post-war baby boom, the volume of vehicles on Britain’s roads increased as dramatically as did its population. The Preston Bypass, Britain’s first motorway, opened in 1958, and in 1963 the Ministry of Transport’s Traffic in Towns report forecast that the car would soon be taken ‘as much for granted as an overcoat’, even though 70% of households did not yet own one.4 Michael Dower’s 1965 ‘Fourth Wave’ report was also influential on experts in the field, tying the idea of a new ‘leisure oriented existence’ for the British citizenry to car-oriented infrastructure.5 The car was seen not only as inevitable but essential to a modern economy, and in the post-war decades rates of car ownership increased to the point that 1981 marked the first time that more British households owned a car than did not.6 Furthermore, the 1980s itself saw a 50% increase in the volume of vehicles on Britain’s roads, more than had been seen in any decade prior, or has occurred in any decade since.7 In 2011, the Department for Transport (DfT) estimated that whereas there were approximately 12 million vehicles in Britain in 1970, in 2010 there were 34 million, the 1980s being the decade that provided the largest increase.8

In government, this expansion was embraced with a pro-road agenda to serve what Margaret Thatcher called ‘the great car economy’.9 The 1989 Roads for Prosperity white paper and its lesser-known follow-up, Trunk Roads, England into the 1990s, detailed the plan to embark upon what the government touted as ‘the biggest road-building programme since the Romans’, based upon a predicted 142% increase in traffic by 2025.10 The 500 road schemes proposed were to cost £23bn, and as a result of the government’s enthusiasm and funding, 24,000 miles of new road were built between 1985 and 1995.11 However, during the 1990s it became increasingly clear that the predicted 142% increase on which the Roads for Prosperity programme was built was a wild overestimate, with the real figure re-estimated to be closer to just 40%, and as such John Major’s Conservative government cut spending on roads significantly during its last years in power.12 Furthermore, the road-building agenda had sparked a significant number of protest groups around the country against works in their local areas, alongside national protest organisations like Alarm UK!, formed in 1991 in direct response to Roads for Prosperity.13 The construction of the M3 through Twyford Down in 1992 catalysed popular support for protests against road building, as the site had been the ‘most protected landscape in southern England’ before the incident, having contained two Sites of Special Scientific Interest, two scheduled ancient monuments, seven rare species, and a designated Area of Outstanding Natural Beauty.14 The European Union issued a complaint and several organisations protested at the site, including Friends of the Earth, Alarm UK!, EarthFirst! and the Dongas Tribe, a group of ‘new age’ travellers local to the area, who garnered public support in particular after featuring on Channel 4’s Dispatches programme.15 Further road projects that received significant opposition were the Newbury road (1994), the M77 in Glasgow (1994), and the M11 link-road (1995); in the North East, Newcastle saw one of the earliest protests when the Flowerpot Tribe occupied trees in Jesmond Dene in 1993 to protest the construction of the Cradlewell Bypass.16

Due to this local and national protest, and to the forecast explosion in traffic failing to materialise, by 1996 most of the road schemes had been cut. After coming to power in 1997, Labour cut the programme further, from an initial 500 schemes to 37, with John Prescott promising ‘many more people using public transport and far fewer journeys by car’.17 Rates of increase in car ownership and traffic slowed, and between 1996 and 2006 the road network expanded in total by just 1.6%.18 However, although more gradual, increases over the 1990s and 2000s still led to the point where 2006 was the first year that more households owned two cars than none, and indeed Labour embarked on its own programme of road construction following its 2000 10-year transport plan, with £59bn earmarked for new roads.19 In Chapters Three and Four, we will see how this car-oriented governance impacted childhoods even in working-class North East neighbourhoods where rates of car ownership fell far below the national average (whereas most British households owned a car by 1981, Newcastle and Gateshead only reached this level in the mid-2000s).20

Figure I. A DfT graph estimating the rise of traffic in Britain over 70 years, 2019.21

The motorisation of the British landscape, driven by the policies of planners and experts in government, saw parents grow more wary of letting their children out to play or walk to school or other activities. The term ‘helicopter parent’ had been coined in 1969, but it became commonplace in Britain and America in the late 1980s as more and more parents ferried their children by car.22 As will be explored later in this chapter, much was made of this rise in the driven-child by academics across the period, as in Mead’s ‘Neighbourhoods and Human Needs’ (1984), Bartlett’s Cities for Children (1999), and Frost’s A History of Children’s Play (2010).23 These contributions developed existing anxieties about road safety, particularly in working-class neighbourhoods which more often had busy roads running through them and relatively little access to alternative outdoor spaces such as parks.24 These developments raised serious concerns about the health and safety of children, and expert discourse began to focus on what could be done to keep children safe. Experts presented a range of views, but generally opinion fell into two camps. The first view, which was the predominant position expressed by both Conservative and Labour governments, was to accept cars as necessary and instead focus on what children and parents could do to protect themselves from road injury. The second view, promoted by the freedom-and-agency school of academic commentators such as Hillman and Adams (and many others who wrote for the Children’s Environments journal), was that cars were not so necessary, and that work should be undertaken to reduce traffic to make streets safer for children.25

The first view had been held by governments since the 1960s and expressed itself most publicly through road safety advertising campaigns. These campaigns were usually aimed at children or parents, and commonly featured children as road victims: one of the earliest road Public Information Films (PIFs) was Batman’s Kerb Drill in 1963.26 The 1980 Mark PIF unequivocally told parents to ‘Make sure the under fives stay inside’ and 1987’s Funeral Blues used footage of a real funeral, showing a class of children mourning their dead friend.27 Many campaigns also centred on the danger caused by drink-driving, such as 1983’s Fancy a Jar? Forget the Car and 1995’s One More, Dave. These PIFs placed the onus on drivers to be responsible on the roads rather than parents or children, but by focussing on alcohol they ignored the 75-90% of road fatalities that did not involve drink drivers in the 1980s, 1990s, and 2000s.28 More to the point, these films clearly evidence that their creators did not consider road infrastructure itself to be an issue, only the people using it. The 1990s Kathy Can’t Sleep and 2000s Hedgehog Family PIFs – among many others – condemned bad drivers, but still framed the dangers of the road as inherent and thus emphasised the responsibility of the pedestrian to be safe.29 Alongside improvements to the safety features of cars, this messaging was effective, as child injuries and deaths on roads did decrease during this period despite increased road traffic. Indeed, in the long run the rate of child deaths per vehicle in Britain fell consistently from 1922 to 1992 by over 98%, from c.80 deaths per 100,000 vehicles to c.2.30 In 2000 THINK! was established as a specific government road-safety campaign, and it reported in 2010 that it had reduced road deaths in the decade by a further 46%.31 This did not mean the roads were environmentally safer, of course – although some residential areas did have 20mph speed limits introduced from 1991 onwards – it meant that expectations and behaviours surrounding roads had changed with the rise of a more safety-conscious mindset.32

The expansion of the road network and the emphasis placed on pedestrian responsibility logically led policymakers and urban planners during the 1980s to the idea of segregating road and footpath networks. If cars and pedestrians never came into contact, both would be safer. Since the beginning of the century the car had already been slowly changing what people saw the ‘street’ as being for, as children’s play came to be understood as being in conflict with its function as a transport corridor.33 From the 1960s onwards, guard rails and ‘cattle pen’ road islands became popular in road design as they allowed speed limits to be raised whilst ostensibly keeping pedestrians safe.34 However, as Ishaque and Noland point out, this not only cut people off from using streets, but there is also ‘no conclusive research evidence’ on whether guard rails made pedestrians safer overall.35 This is because they irritated people into crossing at unmarked crossing points, allowed for increased speed limits, and gave drivers a false sense of separation, since they were generally not strong enough to stop a speeding car in the event of a collision.36 The remnants of Newcastle council’s plans in the 1960s and 1970s to fully separate people and roads via a system of skywalks can still be seen today, an emblematic relic of a vision that sought to separate people from the streets, and demonstrative of the fact that this design ethos was present in both national Conservative and Labour governments and North East Labour councils.37 While skywalks fell out of fashion in the 1980s, the principle of car-people traffic segregation continued into the 1990s and 2000s. The DfT’s 1995 Design of Pedestrian Crossings paper recommended greater use of guard railings and traffic islands to local councils, along with ‘any other means of deterring pedestrians to prevent indiscriminate crossing of the carriageway’.38 Segregated networks affected children in an especially acute way, because they allowed the conversion of streets (places of mixed use) into roads (used only for the purpose of transport), which most profoundly affected those who had used the street most often as a destination rather than a thoroughfare. It is a much riskier proposition to play football on a road, or skywalk, than a street.

Figure II. One of Newcastle’s last skywalks curving past Manors car park.39

Throughout the second half of the 20th century children had been losing ground to cars, but at the turn of the millennium there was an effort to reclaim the street as a pedestrian space with the introduction of the ‘Home Zone’ scheme. In part, home zones were based on Dutch ‘Living Street’ schemes, but they were also a resurrection of the 1938 Street Playgrounds Act which had given local authorities the power to close streets ‘to enable them to be used as playgrounds for children’.40 Indeed, playground streets had never technically been abolished, but from the mid-1960s onward they simply started disappearing as councils either removed or stopped enforcing Play Street Orders as cars proliferated.41 The DfT described home zones on their website in 2005 as having ‘children in mind’ and being ‘places for people, not just for traffic’.42 The DfT’s 2001 Home Zone Design Handbook also specifically framed the programme as endeavouring to create ‘streets where children can play safely’.43 Public and local government support for the schemes was strong, so much so that the government launched the £30 million programme whilst the pilot was still ongoing, styling it as a ‘challenge’ where local authorities competed for funding, and ultimately sixty-one projects were undertaken.44 Academics at the time were unimpressed, however, complaining that the schemes were few in number and limited in scope. As Gill argued, ‘few schemes have succeeded in creating spaces between houses that look as if they are genuinely designed for social rather than car use’.45

Ultimately, the weight of expert opinion behind policymaking and urban planning in the 2000s had shifted little from the 1980s design principles that had made vehicles a priority and pedestrian protections a lesser one. In comparison to Labour’s investment of £16.2bn over 10 years in new road construction under the 2000 Strategic Road Network Scheme, the one-off £30m Home Zone Scheme was more of a trial than a genuine attempt to reconfigure the character of the British road network.46 In its second term Labour invested significantly more in road projects than in public transport, and in 2002 quietly shelved its target of cutting congestion by 2010.47 Whilst New Labour had promised a move away from Conservative car-oriented transport policy – and did initially make moves to do so – over time its approach ‘reverted to the mean’, largely due to the scale of the task and Blair himself having ‘little interest in transport’.48

The Department of the Environment (DoE) stated in its 1990 This Common Inheritance paper on the future of British land management that ‘The Government welcomes the continuing widening of car ownership as an important aspect of personal freedom and choice’. By doing so they failed to recognise that the freedom for drivers limited the freedom of non-drivers, children especially.49 As Hillman and Adams reported when This Common Inheritance was published in 1990, their data showed that ‘only 9%’ of 7-8 year-old children were allowed to go to school on their own, whereas 19 years earlier in 1971 this figure had been 80%.50 Hillman and Adams lamented these ‘restrictions on independent mobility’, framing the issue around parental restrictions, but this trend was as much a direct consequence of government policy as parenting.51 The DfT’s 1990 Children and Roads: A Safer Way plan concluded with the intention to ‘educate parents so that they more fully understand the risks involved and therefore take responsibility for the safety of their children’, continuing the characterisation of road danger as an issue of ignorance.52 Children and Roads notably also included a plan to lower speed limits around schools and residential areas; however, in implementation most of the scheme’s efforts were spent on encouraging parents to keep their children off the streets, increasing road safety training in schools, and campaigning against drink-driving. The ‘main elements’ of the scheme, as described by minister Christopher Chope, were ‘a television commercial… designed to bring home to parents and to motorists the scale of the problem and 13 million leaflets for parents and drivers giving advice on what they can do to ensure that children are safe on our roads’.53 A similar stance was adopted towards cyclists who, it was suggested, should wear dayglo vests when riding as ‘conspicuity is vital for any cyclist who is concerned about his or her safety’.54 That roads were for cars first and foremost was an assumption that went practically unquestioned, and as cars were so dangerous to children, children needed to be kept away from them.

1.2.2 Places without Play? Making Space for the ‘Normal’ Children

Children’s physical safety was not the only reason successive governments took a restrictive approach to regulating childhood mobility. The practice had a history of being partly an attempt to protect children’s moral health too. Early attempts to remove children from the streets in the 1900s were undertaken in the name of what became known as the ‘child saving movement’. The movement, which first emerged in the US, was initially based around the creation of a juvenile court system but also encompassed more general efforts to combat ‘juvenile delinquency’, and was concerned with this more than with stopping children being run over by motor cars.55 The development of playgrounds as specific off-street play spaces was closely linked with this movement, which, whilst having no specific campaign behind it, was mostly centred around Britain’s Societies for the Prevention of Cruelty to Children (SPCCs), which were established as a national charity in 1889.56 The very first such playgrounds in the world were opened in Manchester in 1859, and many more opened in the years afterwards as part of the shift pushing British youth towards more guarded forms of play.57 Octavia Hill, one of the three founders of the National Trust and campaigner for the protection of green spaces like Hampstead Heath, pioneered this type of campaigning in her concern for the poor of London. The playgrounds she created, however, did not provide an equitable alternative to street play. They charged a fee for entry, were supervised by adults, were walled off from the rest of the neighbourhood and were not open all the time. It is perhaps little wonder, then, that they were often vandalised by local children.58 Because the play space provided was of a single, universal type, it excluded all the children who did not fit the normative idea of ‘the child’ who would be using it, most evidently the children whose families could not pay to access it.

As Anthony Platt argues in Child Savers, the child-saving movement was largely led by parents of upper and middle-class background and largely directed at working-class parents’ children. Whilst intentions may have been noble and the movement was a force for good in many young people’s lives, at its core it was an imposition of a method of social control on many working-class people: people who may have gained a play park but had lost the streets to the vehicles of the middle and upper classes.59 In 2009 when Platt revisited his 1977 edition of Child Savers, his main point of revision was to emphasise the ‘staying power’ of the 19th century idea of ‘hard-core biological determinism’ in planning and policymaking expert circles.60 By this he meant that, subconsciously or otherwise, the approach to the management of working-class children’s environments in middle and upper class expert circles in the 21st century still followed a ‘social Darwinist ideology’ that sought to reform children by removing them from the streets which, by being the domain of the working class, would corrupt them.61

The child-saving approach waned in the 1930s and 1940s, but in the 1950s and 1960s it took hold again in a changed form known as ‘child centred’ pedagogy.62 In theory child centredness meant talking to children and basing childcare and education around their needs, but as Tisdall argues in A Progressive Education? the underlying logic of this philosophy was that children were ‘fundamentally separate from adults, distinguished by their developmental immaturity’.63 Everything was to be done for children because children themselves could not be trusted to do things for themselves, just as the child-saving movement had not trusted children to play on the street by themselves. Whilst child-centred pedagogy evolved to be ever more responsive to children throughout this period, it never conceded any control to children, only contingent consultation.64 Evidence of this approach in the 1980s can be seen in the safety legislation introduced to regulate features of playground equipment, such as the sharpness of edges and the size of gaps between components, whilst informal unregulated places of play such as former bombsites and scrublands were increasingly subject to redevelopment, as catalogued by academic works at the time like Robin Moore’s Childhood’s Domain.65 Moore pointed out that the establishment of the Association for Children’s Play and Recreation (ACPR) in 1983, a charity tied to the National Playing Fields Association with the aim of providing play spaces such as adventure playgrounds, was both a response to decreasing outdoor play and a means by which to control it, because recent developments had led Britain to a point where ‘children’s play must be increasingly regarded as a policy imperative’.66

The aim of the ACPR was to provide places for children to play, and this meant getting them off the road. Closely mirroring early 20th-century ideals, children playing games in the street were increasingly seen as a nuisance or menace, as they might get in the way of moving vehicles or damage a parked one, as evidenced in the slow but steady un-designation of Play Streets across Britain during this period.67 Teenagers especially were the target of accusations of ‘hanging around’ on streets, although they were also unwelcome on playgrounds, hence the propensity of some to seek out abandoned or cut-off places where they could ‘look out and not be seen’, as Patsy Owens’ 1988 interviews documented.68 This fear of the child will be explored in the next section of this chapter, but the point here is that experts in policymaking and planning – by encouraging children to keep out of the street – were following a tradition that cast independent outdoor street play as both physically and morally dangerous.

This aspect of the universalised approach to childhood environments is especially pertinent to the context of the North East in the 1980s and onwards, as more and more of the region’s characteristic urban play space – the back-lane – became less attractive or off-limits to children, with relatively little land provided as replacement in the form of playgrounds. This was further explained by parallel developments in 1980s British society which saw an increasing emphasis on the importance of individual identity and agency, causing communal spaces such as parks to fall out of favour with parents as play areas compared to individualised spaces such as private gardens and living rooms.69 The government’s encouragement of the creation and commercialisation of semi-public-semi-private areas such as malls and town centres led to a situation where even semi-public, car-free places were unfriendly to the idea of young people ‘hanging around’, pushing children to the fringes. This created a new role for the police in ‘protecting the interests of private business and regulating the activities of the “non-consumer”’.70 In 1989, Nikolas Rose characterised recent developments as a pervasive underlying ‘process of bureaucratisation’.71 Rob White worried that this situation ‘frequently leads to conflict between the police and teenagers over the use of public spaces’.72 Once again, working-class children, less likely to have access to significant private outdoor space and more likely to be in commercial areas as a ‘non-consumer’, were more often impacted by this change in the policing of environments than their wealthier contemporaries.

Experts in the fields of education and urban planning did not always support this trend, but mostly accepted it as unassailable and instead spent their energies on discussing how the ‘playground of tomorrow’ could be made into a safe, fun, and integral part of modern Britain.73 The consequences of focussing on playgrounds over broader play-friendly civic spaces were not lost on designers, however, who foresaw that ‘such a setting would not make a good play environment because it would lack many of those elements necessary for meaningful play: variety, complexity, challenge, flexibility, adaptability, etc.’ and that children ‘want to be where it’s at, to see what is going on, to engage with the world beyond’.74 Paul Wilkinson even noted that contemporary playgrounds ‘are not being heavily used because children do not like them; simply put they are neither fun nor challenging. Incidentally, this also gives them the appearance of being safe: few accidents are reported because few children use them’.75 However, in the face of a lack of funding and political will, the prospect of changing the entire environment rather than creating better refuges from it – making the world safe for play rather than making a world safe for play – seemed an impossible task and was thus not seriously considered by many.76

However, less constrained by practicalities and more concerned with possibilities, the freedom-and-agency school of academic commentators (prominent voices like Sheridan Bartlett, Ulrich Beck, Louise Chawla, Mayer Hillman, and Robin Moore) took a very different view, even if ultimately – as we shall see – the shift away from street play could not be easily reversed. Generally, they argued that it was not the responsibility of individual children and parents to act safely, but that of the communities they lived in to be safe for them. Criticism of the individualist approach to childcare was sharpened by opposition to Thatcherite ideologies, which were associated with social atomisation and marketisation. This critique is commonly remembered through the free school milk furore, but the 1980 Education Act liberalised school services more generally, as did the 1986 Social Security Act, 1988 Local Government Act, and 1988 Education Reform Act.77 Although most associated with Thatcher, the ‘choice agenda’ was also adopted by New Labour governments, as is evident in the creation of Academies under the Learning and Skills Act 2000. Tony Blair famously sent his own son to a school that had opted out of local government control under Thatcher’s 1988 act, demonstrating his administration’s endorsement of a marketised, individualised approach to childcare.78

The argument that academic proponents of the freedom-and-agency school put up against the prevailing neoliberal perspective was that the personal-choice-and-responsibility approach to childcare was ultimately limiting children’s choices about where they could be. As a first example, Mayer Hillman et al.’s 1990 One False Move took a road safety poster to be exemplary of their issue with the government narrative:

Figure III. A government road safety poster c.1980s/1990s.79

Hillman et al. were concerned that young people’s freedom of mobility was being eroded, finding that whereas in the 1970s ‘nearly all’ British 9-year-olds were allowed to cross the street independently, now in the 1990s only half were.80 They contested the DfT’s claim in Children and Roads, as part of the 1990 Safety on the Move campaign, that ‘Over the last quarter of a century, Britain’s roads have become much safer’, and the words of the Association of Chief Police Officers, which stated that Britain was ‘the safest country… in Europe’ regarding its roads.81 Accidents and deaths may be down, Hillman et al. argued, but this did not mean the roads were safer; they contended the statistics indicated that the roads were considered more dangerous than ever, and thus avoided.82 Exceptionally for the time, One False Move attributed reduced rates of child road accidents to a loss of childhood freedoms, saying that ‘the accident statistics are reconciled by the loss of children’s freedom… it is the response to [the] danger, by both children and their parents, that has contained the road accident death rate’.83

The founding of the Children’s Environments Quarterly journal in 1984 manifested the growth of academic interest in these issues. Based in the US, but with many British and international contributors, the journal was designed to be an interdisciplinary ‘low-cost, highly graphic alternative to more conventional journals, without the detached formality that many were finding troubling in “serious” academic publications’.84 Despite publishers advising that the idea was not economically viable, interest was strong enough to keep the journal running, and it served as a collector of academic work that challenged urban design trends of the day.85 The transatlantic nature of the journal reflected a transatlantic interest in children’s environments, with American academics sharing many of the same concerns as British ones. Indeed, then as now, British academics in this field relied upon and were entangled with work coming out of the US. For example, the essay ‘Neighbourhoods and Human Needs’ in the opening volume of the journal came from the influential American anthropologist Margaret Mead, but was obviously also applicable to the British context:

When children move into a newly built housing estate that is inadequately protected from automobiles, parents may be so frightened… that they give the children no freedom of movement at all.86

Those writing for the Children’s Environments journal turned their scrutiny on experts in positions of power, questioning their methods and philosophies. Colin Ward’s popular 1978 text on urbanism The Child in the City described the myriad ways ‘a significant proportion of the city’s children have come to be at war with their environment’, and found city planners to hold simplistic notions of children characterised by a concept of a ‘universal child’ which excluded lower-income, non-white, and female childhoods that typically had less access to the cars and technologies that facilitated their vision of late 20th century life.87 Ward’s text would go on to influence many others, including Claire Freeman’s 1995 Planning and Play, which examined British planning literature of the period and lamented the ‘lack of recognition given to children’s needs’ as ‘clearly evident in the almost total omission of any discussion of children in mainstream planning literature’.88 In a later study (2005), Freeman questioned urban planners on their methods and found that children were considered only in the planning of ‘recreation spaces’, and ignored in the planning of streets, houses, shops, leisure facilities, and infrastructure.89 This demonstrates that whilst many scholars had been denouncing planning methods for the past three decades, little had changed in response to their calls for action.

Whilst the general argumentative thrust of the freedom-and-agency school of academic work did not much change across these decades, the methods of argument did. In the 1970s, academic literature of this type tended to focus specifically on the benefits the natural world had for children with psychological differences, rather than children more generally. Kaplan’s 1977 Patterns of Environmental Preference found that suburban-child participants with diagnosed Attention Deficit Disorder (ADD) reported beneficial outcomes on their mental health up to several years after being sent on an extended nature-camp expedition.90 Similarly, Behar and Stevens’ 1978 Wilderness Camping placed American city children with Attention Deficit Hyperactivity Disorder (ADHD) on a ‘residential treatment programme’ centred around outdoor activities, and found that the majority of their subjects demonstrated ‘improved interpersonal skills and school performance’ after the activity.91 During the 1980s and 1990s, this approach began to change so that it was most common for studies to consider children in general as being under threat from reduced access to outdoor space, particularly natural outdoor space, as in Boyden and Holden’s 1991 Children of the Cities.92 Media in both Britain and the US picked up on this transatlantic concern during the 1980s and 1990s and indeed were ahead of experts in expressing alarm about the role new technologies were playing in children’s lives – as will be explored in Chapter Two. A 1997 article in Time claimed that a chronic lack of play and physical touch during childhood due to too much time spent indoors could result in developing a brain ‘20 percent to 30 percent smaller than normal’, which, whilst wrong, demonstrates the acute fears of the period, and that academics were far from alone in their concerns.93

Judy Wajcman’s 1991 Feminism Confronts Technology is exemplary of the parallel growing academic interest in past struggles over urban environments. Wajcman used an assessment of the ‘play streets’ movement of the 20th century as a lens through which to view contemporary debates over similar issues, the movement being an example of working-class people, predominantly women, creating an alternative vision of how childhood environments could be managed. Beginning in the 1930s, Play Streets sought to reduce the frequency with which middle-class drivers, or delivery drivers working for business-class bosses, were running over working-class children.94 Wajcman argued that increased traffic was a major factor in the decline of working-class street sociability because adults were no longer required to be out on the streets to watch over their and others’ children.95 As formerly unassuming activities such as playing football or tag became acts of delinquency or hooliganism, working-class people – women and children in particular – were ‘literally left stranded in… cities designed around the motor car’.96 Katrina Navickas describes this as a lost form of ‘commoning’ (a process that generates relationships) in a forthcoming book.97 Wajcman framed play streets as a form of counter-cultural resistance, one that ‘started from the assumption that city children had the right to play in the streets where they lived, and that cars, not children, were the main problem’.98 Indeed, it was common for academics to invoke a form of ‘nostalgic progressivism’ that used memories of the past to argue for a radical shift in policy. Conversely, government experts offered a kind of ‘futurist conservatism’.

Although practical experts and theoretical and historical experts had their differences over urban design, both tended to overlook dangers that children faced in environments aside from the street. For example, the AA Motoring Trust’s 2003 report Accidents and Children found that the number of deaths of children as passengers in cars was considerable and overlooked; for young children in particular the risk of being killed in a car was greater than that of being hit by one.99 There was no wide debate about whether children should or should not be driven. The National Children’s Bureau also found that in the 2000s three times as many children were taken to hospital each year for falling out of bed as for falling out of trees.100 The National Trust’s 2012 Natural Childhood report found that during the 2000s one million children aged 14 or under went into A&E departments from home injuries: ‘30,000 with symptoms of poisoning, mostly from domestic cleaning products, and 50,000 with burns or scalds’.101 Additionally, it found that 500,000 infants and toddlers each year were injured in the home, 35,000 from falling down stairs, and that almost half of all fatal accidents to children were caused by house fires.102 In terms of sheer numbers, this meant that the home for a child was by far the most dangerous place to be. While cars had made the streets unsafe, the home was not the haven it was perceived to be, and the dangers it posed were, in general, far more serious than the injuries a child could sustain from outdoor play. On a much bigger scale, in relative terms the dangers of cars, strangers, and natural spaces were nowhere near as important in determining children’s lifespans as those of poverty and inequality between children.103 All this is to say that the danger of the outdoors was real, but it was also specifically focussed on as a danger to children in a way that the dangers of being in the home or being a car passenger were not.

The debate between the health-and-safety approach of policymakers and planners and the freedom-and-agency approach of many academics defined much of how the physical environments that children inhabited came to change and be understood across these decades, especially in response to the rapid growth in car ownership and campaigns to take children off the streets. This debate was not an equitable one, however. The work of Chawla and others did not materialise in any extensive physical changes to the landscape; New Labour’s experimental and limited home zone scheme was the largest attempt to rebalance streetscapes.104 The process of further restrictions being placed on children’s mobility continued, with the burden falling especially on those that did not fit the mould of the universal child. Thus a health-and-safety approach brought reductions in the spaces available to children who could not easily access a park, garden, sports centre, National Trust property, or some other outdoor space.

1.3 Safeguarding, Crime, and Children: Challenging Traditional Expertise

Girls in particular were said to be threatened by one of the most enduring dangers of late 20th- and early 21st-century Britain: not the motor car but ‘The Stranger’, an idea that captured the public imagination. Promoted by parents, newspapers, charities, and indeed experts in government, a national ‘stranger-danger’ discourse arose which asked: ‘what can be done to protect our children?’. This section will explore the impact of this popular and media discourse on experts, and how – once again – policymakers and academics differed in their response to the rise of this new threat, whilst also grappling with a movement that challenged their traditional authority. I will also explain how expert discourse that conceived of child safeguarding as a societal issue rather than an individual one both clashed with the dominant individualist culture of the period and perpetuated solutions based on the idea of a universal child. Safeguarding solutions, whilst partially valid, inevitably led to the undervaluing of issues affecting children who did not fit the normative model.

The stranger provoked a response in the public that the motor car did not, even though the latter was evidently more deadly. Why? First, strangers posed an intentional threat rather than an accidental one, making the danger more malicious. Second, the inherent humanness of stranger-danger made it feel more personal and immediately understandable as compared to the more complex system of factors that constituted car danger. Finally, the unknowability of the stranger gave the idea power. Its theoretical, semi-fictional quality gave it an air of mystery so often used in fiction to create atmospheres of anxiety, suspense, or horror – feeding people’s fears of a threat that they knew was out there but could not see. Indeed, whilst abductions, abuses, and murders by strangers did pose a threat to children, the specific idea of the stranger that emerged during this period arose largely on the back of what Jennifer Crane calls a ‘sensationalist’ media narrative that began with the Moors murders in 1966 and entered its heyday in the 1980s and 1990s.105 The construction of the idea of strangers during this period – which contributed to parents being more restrictive over their children’s mobility – overstated the dangers and underplayed the benefits of allowing children independent outdoor play.106 Additionally, particularly following James Bulger’s murder in 1993, a second construction arose in public, media, and expert discourse which argued children themselves (teenagers especially) were something to be fearful of. Like the idea of ‘The Stranger’, the idea of ‘The Youth’ was representative of a broad fear: that the younger generation had lost discipline, leading them to become antisocial and dangerous menaces to society. As with cars, experts were divided into two camps on the issue. In general, government experts of the health-and-safety school endorsed stricter control of children’s independent mobility to protect them from strangers and to protect strangers from them. Meanwhile, academics of the freedom-and-agency school supported less control over children’s mobility. For example, in 1992 historian Philip Jenkins called the recent focus on stranger-danger a baseless effort to ‘induce fear and moral panic’ from politicians and the press.107

The stranger narrative of the 1980s had its roots in the high-profile reporting of child abuse (also called child maltreatment) cases from the 1960s onwards, most notably the 1966 Moors murders of five children. The fact that one of those convicted in that case, Myra Hindley, was a woman gave it particular traction in the press, with Hindley earning the tagline ‘the most evil woman in Britain’.108 Later investigations into possible other victims and Hindley’s repeated appeals for release from prison in subsequent years kept the case alive in public consciousness. The aim of the media coverage of the Moors murders and subsequent similar cases was twofold. First, it was to convey the horror of the story to the public and condemn the criminals; second, it was to critique the organisations which had failed to prevent the crimes occurring. Indeed, traditional experts such as social workers, police, doctors, psychiatrists, government officials, and teachers were often heavily criticised in the press for their failures in cases of child abuse and murder, particularly if the perpetrator was related to the child.109

In the infamous 1973 case of Maria Colwell, where the seven-year-old was abused and murdered by her stepfather, much of the reporting, and the ‘primary focus’ of the subsequent expert-led public inquiry, was into the failings leading up to Maria’s death of institutions such as social services, the NSPCC, and the health service.110 Criticisms centred on poor communication between services, a general lack of competence, and institutional intransigence. Maria Colwell was by no means a one-off; the media responded analogously throughout the 1970s, 1980s, and 1990s to similar cases including a spate of three in 1984: the murders of Jasmine Beckford, Tyra Henry, and Heidi Koseda, all young girls killed by their father or stepfather. Black children and girls especially were at disproportionate risk of such a danger, including Jasmine, Tyra, and, 16 years later, Victoria Climbié, whose death kickstarted several pieces of child-protection legislation under Tony Blair. In the report into the case of Tyra Henry, white social workers were found to have failed to intervene despite being aware of domestic abuse because they ‘lacked the confidence to challenge the family because they were black’.111 Once again, the experts had failed, and media coverage encouraged the public to take notice.112 Stephen Bubb, the leader of Lambeth Council where Tyra had lived, called for an end to a ‘trial in the press of the social workers’.113 Margaret Thatcher’s government responded to the 1984 cases with new guidelines for social workers on how to handle child abuse cases and by passing the 1984 Child Abduction Act, which more explicitly recognised the rights of the child than the old 1861 Offences Against the Person Act and created separate categories of crime for ‘Abduction by a Parent’ and ‘Abduction by Other Persons’.114

In the North East, the Cleveland scandal of 1987/1988, which a local MP called ‘the greatest child abuse crisis that Britain has ever faced’, also spawned a national media storm surrounding social workers.115 121 children were taken away from their parents in the borough under accusations of sexual abuse, only for 94 to be returned after being determined to have been ‘incorrectly diagnosed’.116 Some scholars have since argued that many of the original diagnoses were in fact correct, and that government officials suppressed evidence supporting the diagnoses because acknowledging the scale of abuse would have required significant new resources.117 Either way, at the time and in popular memory the Cleveland case became a totemic example of expert overreach, and is popularly credited with part-inspiring the 1989 Children Act, which shifted the focus of responsibility for children away from the state and towards individual families.118

By the mid-to-late 1980s, following two decades of increasingly publicised cases of child abuse, the issue of sexual abuse in particular entered expert discourse as a significant political talking point and agenda. Moreover, the growing recognition of the idea of ‘Battered Child Syndrome’ amongst medical professionals after 1962, following an article of the same name in the Journal of the American Medical Association, gave legitimacy among experts to the problems of child protection and abuse.119 The overall conception of abuse was moving away from being understood as a medico-social problem of mental or physical health and toward a socio-legal problem with multitudinous societal influences and effects. This, together with the increased interest from the public and media in cases of child abuse, represented an experiential and emotional turn in the study of child abuse; that being a greater value placed on ‘normal’ people’s views over the views of experts. The Childline charity, established in 1986, was founded on this principle. Esther Rantzen, Childline’s founder, described the organisation’s concept of child abuse as ‘incorporating sexual abuse, but moving beyond it to encompass physical and emotional abuse, and neglect’, something she criticised experts of the period for ignoring.120 Thus, experts found themselves asking: how are we to address this ‘new’ Battered Child condition, for which our traditional methods are inadequate?

The direction of travel of expert analysis on this issue from the 1970s through into the 1990s involved turning away from thinking about child protection in the (now old-fashioned) paternalistic sense, wherein those in authority assumed they knew what was best for children. Instead, Tisdall explains, it became accepted amongst theorists that parents or even children themselves could be treated as sources of expertise on such issues, and this widened the discursive space surrounding what forces in society contributed to environments in which cases of abuse occurred, and who was best-placed to understand those forces.121 When child abuse had been seen as primarily a medical issue in the first half of the 20th century, assessment of it had been made at the level of the individual, asking: ‘what is wrong with this person?’, when considering either the abused or the abusers. Now that child abuse was understood as a social issue with medical consequences, the question had become ‘what is wrong with society?’. The older individualistic medical approach was deeply flawed in its inability to address patterns and trends of abuse, but the social approach also had its problems. As Crane argues, the impact of this new expert discourse was to bring about the emergence of ‘a universalist model of childhood vulnerability, characterised around an ageless, classless, genderless “child”’.122

This was connected to the ‘child-centred’ pedagogy of the era which, as discussed in the Urban Landscapes section, was an approach to teaching and parenting that ostensibly put children at the centre of its philosophy, but was mostly interested in fitting ‘incomplete and incapable’ young people into a particular universal societal mould.123 Child-centredness influenced many aspects of children’s lives from the way school buildings were designed to the way social services operated, because, as Roy Kozlovsky explains, the idea of catering to ‘child’ as opposed to ‘children’ led to certain groups being excluded from supposedly inclusive environments.124 For children with disabilities, for example, the poor accessibility of school buildings built during the 1970s and 1980s mirrored the ill-provision of their education, which by treating all children as one actually furthered certain inequalities between them.125 Furthermore, because the child-centred approach to child protection was far more attentive to identifying and addressing threats to children from outside the universal model than from inside it, it disproportionately focussed on the dangers of strangers. People known to children – parents, teachers, and peers – were inside the model, and so even though the majority of child maltreatment cases (sexual abuse cases especially) were and are perpetrated by people already known to the child, considerably less emphasis was placed on the dangers those ‘internal’ threats posed.126 Waters highlights how this institutional suspicion of the unfamiliar also fed into other societal prejudices, notably that of race.127

Policymakers under Thatcher governments endorsed this universalised ideal of childhood, as evidenced in the acts they introduced to centralise control over children’s lives at home and school. There were obvious efforts such as the imposed ‘prohibition on promoting homosexuality’ placed on schools and local authorities under the Local Government Act 1988, but there were also a number of acts that enforced family uniformity by more indirect means. To the Thatcher administrations of the 1980s, home and family meant – even symbolised – safety and normality, and the way they approached the legislation of childhood reflected that. The 1980 Child Care Act, for example, focussed on keeping families together by encouraging councils to work with private organisations to ‘diminish the need to receive children into or keep them in care… or to bring children before a juvenile court’.128 John Major’s government continued this approach with the 1991 Child Support Act, and consequent establishment of the Child Support Agency in 1993, which required the tracking down of ‘absent’ parents (fathers primarily) to get them to pay child support instead of the government; the intention thereby being to make sure parents met their legal obligations and to discourage family breakups.129

The 1989 Children Act is the crucial piece of legislation to examine on this issue, as it introduced the most significant changes to encourage the Conservative model of family life. Partly following the ideas of the new ‘child-centred’ approach to childcare, the Act specified that local authorities should give ‘due consideration’ to children’s wishes about where they wanted to live, but that ultimately parents had total authority on the matter:

‘Any person who has parental responsibility for a child may at any time remove the child from accommodation provided by or on behalf of the local authority’

– Children Act 1989, Part 3, Section 20 (8).130

The law stipulated that only unmarried fathers could lose parental responsibility (PR); mothers and married fathers could only ever have PR restricted in rare and severe cases.131 This, along with the rule that unmarried fathers did not automatically have PR, meant the act tacitly endorsed a ‘traditional’ nuclear-family structure.132 Even in cases where it was deemed that a child should be taken away from their parents, the act still required that they be housed as close to their parents’ home as possible, and that they keep the family name.133 Furthermore, in part 5 (‘The Protection of Children’), the act firmly established child abuse as a legal issue first and social/medical issue second, which – after implementation – led to a greater reliance on hard-to-gather forensic evidence to convict in such cases, leaving children stuck in ‘forensic limbo’ as cases drew out longer, and fewer were processed overall.134

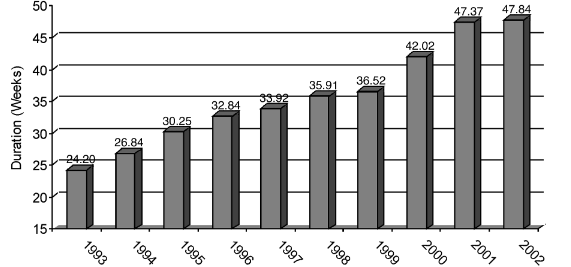

Figure IV. ‘Estimate average duration of care proceedings across all courts’.135

The focus of the Children Act was thus on addressing exceptionally horrific newsworthy individual cases of child abuse as opposed to the broader pervasive issue of child abuse, an approach which was supported by the press’ own fascination with such cases. This type of legislation, which assumed a singular preferred family model, lost sight of the specificity by which any one child’s life differs from another’s under the same societal forces, altered by crucial variables including race, class, gender, and environment. The Children Act’s insistence on the home and family being the preferred safe space for children, for example, and that authorities should only intervene if a child is ‘beyond parental control’, failed to consider the increased risk of sexual abuse that girls faced in the home, particularly from father-figures, and the protection that being outdoors with other children could offer from such threats.136

To say that the child-protection agenda of experts in government and social services during the 1980s and 1990s was totally based around efforts to encourage ‘family values’ however, would be untrue. As part of the experiential and emotional turn of the era and the Thatcherite distrust of the civil service, policymakers were also keen to consult about new approaches to the management of the systems of child protection with non-traditional sources such as feminist critics, charities, and public campaign groups who ‘spoke for children’.137 This led to a considerable degree of independence being given to small-scale voluntary-sector groups – at the expense of government and social services – to run often quite radical programmes of education and activity.138 For example, in 1986 the Central Office of Information (COI) hired one such small charity, Kidscape, to create official child-safety public information films (PIFs) on their behalf, because they had assessed their own Charley PIFs to have been ineffective.139 More broadly, this approach devolved the responsibility for child-support programmes to local authorities and charities, which allowed certain groups to pursue new approaches to child protection, but it also meant less regulation and uniformity in the support available to children. The largest of these groups founded in the 1980s and 1990s, such as Kidscape, Kids Company, Childline, Children in Need, KidsOut, and the WAVE Trust were based in London, and as such their services were harder to learn about and access for children in the North East.140 Groups in the North East, like the Gateshead Young Women’s Outreach Project (GYWOP), which drew on the experiential and emotional expertise of the children they worked with to discuss issues of contraception and sexual abuse, were smaller and had less influence over experts in government.141

Figure V. Scene from stranger-danger film ‘Adult & Child’ (1994).142

The growing significance of charities marked an important shift in the government’s conception of who was considered an authority on issues of child protection, as now parents and even children themselves were being consulted as experts, not directly by policymakers, but by the private organisations they worked with. This approach complemented Thatcher’s distrust of the public sector and drive to focus on new alternative sources of expertise such as those in private charities.143 Childline is one prominent example, the charity coming out of the success of the BBC show Childwatch at the insistence of its producer Esther Rantzen.144 Many traditional experts such as those at the NSPCC and National Children’s Home (NCH), whilst supportive of the effort, expressed doubts as to its longevity because it was run by journalists and inexperienced volunteers, but it proved extremely popular.145 In a retrospective seminar in 2016, the MP Shaun Woodward said of Childline:

Thirty years ago we didn’t talk about child abuse. Child abuse was something that most people thought happened in extreme cases in places that had nothing to do with them… What Esther brought to it was her journalism and what she found was that there were these kids who for whatever reason weren’t being picked up by the NSPCC, weren’t being picked up by the statutory services.146

Interestingly though, whilst policymakers in the 1970s and 1980s were often keen to consider popular sentiment and consult with non-traditional experts, academics were not so quick.147 The trust in experiential and emotional expertise that organisations like Childline represented in some respects undermined the value of traditional experts, challenging their authority. Until the 1990s the academic response to the topic of stranger-danger and child protection was notably muted, especially when compared to the literature about the threats that urbanism posed to childhood, for example. Why was this? Experts often failed to grapple effectively with these emotionally charged public debates because they were unfamiliar with them. Debating the dangers of cars and urbanism was known territory for many, as this involved relatively formalised and detached discussions within expert circles. In the realm of strangers, however, the prevalent discourse was non-traditional: it was passionate, experiential, and led by journalists and public campaign groups who were often distrustful of established sources of authority. For example, Colin Ward’s The Child in the City (1978), Robin Moore’s Childhood’s Domain (1986), and Neil Postman’s The Disappearance of Childhood (1992) were three influential texts from across the era which lamented the loss of childhood freedoms – and have since been frequently referenced in academic work – but did not address the topic of strangers or child abuse.148

The arguments these academics made about ‘lost childhoods’ may be seen as largely legitimate and, indeed, the work of this thesis supports many of their ideas, but because they were written in response to what they saw as an emerging threat to childhood liberties, they did not engage with the stranger-danger narrative as they did the urbanism narrative. A scepticism about the protection debate led those scholars who did write about child murder cases to talk less about issues of child protection, and more about policy responses to issues of child protection. For example, Nigel Parton’s 1986 analysis of the official report into Jasmine Beckford’s murder found its conclusions to be ‘very much open to doubt’ and ‘misdirecting our attentions from the major issues’ because of its tight focus only on issues within the Beckford family, classifying them as the problem, and not thinking more broadly about societal forces acting upon the family.149 Reading the report, its focus on ‘high risk’ cases does distract it from addressing how child abuse could be better prevented generally, but similarly Parton’s critique can also be judged as paying too little attention to the importance of these high-profile cases. Much academic discourse in the field was preoccupied with structural analyses, and critiqued ‘experientialist’ approaches taken by policymakers in conjunction with the media as being too often anecdotal, sensationalist, and lacking a serious methodology. However, the value in ‘experientialism’ came to be more recognised in the 2000s.

An emphasis on experience and emotion initially acted to exclude traditional experts, but by the mid-1990s more and more academic work started to address the stranger-issue and, indeed, to engage with experiential and emotional sources of expertise.150 In an article for Children’s Environments in 1994, one of the journal’s first to assess the topic of strangers, the researchers interviewed parents about their fears for their children and found that ‘for most parents the fear of random physical assault by a stranger superseded all other fears of violation or harm’.151 The researchers also concluded that ‘Parents commonly fail to recognize that children’s safety is an illusion’ – meaning that danger was an inherent and in some ways essential part of childhood, and this quote is exemplary of the broader academic approach to the stranger.152 Whilst not dismissive of public and media concerns about strangers, many academic contributors argued the issue had been overblown by newspapers and that the measures parents and policy-makers were taking to combat stranger-danger were disproportionately restrictive. Pain’s ‘Paranoid Parenting?’ (2006) described ‘risk-averse’ parents as ‘cosseting their children indoors’.153 Katz in Power, Space and Terror (2006) made the point that street crime had been falling since the 1970s and 1980s, meaning that – in terms of crime – parents had generally played in more dangerous streets than those they denied their children.154 Handy et al.’s 2008 ‘Neighbourhood Design and Children’s Outdoor Play’ similarly emphasised that it was the ‘parental perception of neighbourhood safety’, rather than actual safety, that was the significant restrictor of child mobility.155

This tendency toward disagreement in expert discourse over child protection between academics and policymakers was expedited by the rise of a new fear following the murder of James Bulger in 1993. James Bulger’s case was as widely publicised as those of Maria Colwell or Jasmine Beckford, but what made it particularly notable was that the killers were children themselves: two ten-year-old boys who led James away from his mother in a busy shopping centre and were caught on CCTV doing so. The evocative image of the toddler being led away spread widely, and the event sparked much discourse surrounding ‘the state of the youth’ in modern Britain.156 In the North East, response to the Bulger case was shaped by the memory of 11-year-old Mary Bell, who in 1968 had murdered two young boys by strangulation on Tyneside and who still loomed large in popular memory. This was so much the case that soon after the story of James Bulger broke, reporters tracked down the now 41-year-old Bell, who had assumed a new identity and was consequently forced to do so again after members of the public caught wind of her address and threatened assault.157

The media and public concern that arose following the Bulger case in particular led to a notable change in policy approach from the government, with both John Major and Tony Blair promising to ‘crack down’ on child-crime. In the 1980s the Thatcher governments had been comparatively lenient towards youth crime, with the 1982 Criminal Justice Act significantly reducing the imprisonment of under-21s and limiting the use and length of custody in young offender institutions, subsequently leading to reduced crime rates and prison populations for young people.158 In the 1990s, however, Major and Blair’s governments took a much harder – and electorally popular – stance toward youth crime. Major’s notorious 1993 ‘Back to Basics’ speech summed up the approach succinctly in the insistence that society should ‘condemn a little more and understand a little less’.159 One month later the home secretary Michael Howard increased the maximum sentence of detention for 15-17-year-olds and the 1993 Criminal Justice Act introduced Secure Training Centres (STCs), privately-run facilities in the style of US ‘boot camps’.160 Despite Blair calling the proposal a ‘sham’ in 1994, Labour would go on to further invest in the programme once in power.161 On the announcement, Howard stated that child offenders ‘are adult in everything except years’ and between 1993 and 1998 the number of imprisoned teenagers doubled.162 Similarly, the 1994 Criminal Justice and Public Order Act and 1997 Confiscation of Alcohol Act gave the police greater powers to break up and move along groups of young people on the street.163

Under Tony Blair’s premiership this basic approach changed little. His government first introduced the 1998 Crime and Disorder Act, which abolished the rebuttable presumption that children aged 10 to 13 were ‘doli incapax’ (incapable of committing a crime) and created a system of Anti-Social Behaviour Orders (ASBOs), which could be used in any event where a child behaved ‘in a manner that caused or was likely to cause harassment, alarm or distress to one or more persons’.164 Under the same legislation ‘parenting orders’ were introduced, which legally required parents of children with ASBOs to impose curfews and attend parenting classes.165 The 2003 Anti-Social Behaviour Act strengthened the ASBO system, giving police the power to disperse groups of 2 or more children in any public place if their presence ‘has resulted, or is likely to result, in any members of the public being intimidated, harassed, alarmed or distressed’.166 This was strong policy that matched Blair’s ‘tough on crime, tough on the causes of crime’ slogan, paired with an emphasis on personal responsibility.

This philosophy enabled an approach towards crime that not only focussed more on punishment of individuals but, particularly in the case of children, distanced government from responsibility towards them. Major and Blair’s more punitive policy platforms increased the level of state intervention but decreased the level of care the state was expected to provide or take responsibility for. For example, the 2007 paper The Children’s Plan: Building Brighter Futures placed emphasis on children specifically as being outside of the government’s remit. In language reminiscent of the Thatcher administrations, the stated first principle of the paper was that ‘Government does not bring up children – parents do’.167 What differentiated Blairite youth justice from Thatcherite youth justice, however, and what meant Blair brought far more children into contact with the youth justice system, was the belief that young people had become dangers to society, not only that society was a danger to them. This can be seen in how the term ‘child’ was often withheld from child-criminals, who were instead referred to as youths, yobs, teens, young offenders, delinquents, or any number of other terms. Emblematic of this were the comments of the Secretary of State for Justice, Jack Straw, in 2008, when questioned on Britain having ‘more young people in custody than any other comparable country in Europe’, that:

Most young people who are put into custody are aged 16 and 17 – they are not children; they are often large, unpleasant thugs, and they are frightening to the public.168

This categorisation of young offenders as ‘not children’ is indicative of the perception held of them in policymaking circles during the 1990s and 2000s, and of the STC and ASBO systems set up to deal with them. In some respects, this child-danger discourse was a response to the 1980s stranger-danger narrative’s construction of the child as being pure and powerless.169 Children are not so innocent, the argument went; they are not always the ones to be fearful for, but to be fearful of. The Bulger case was only an extreme symptom of a wider problem. This was not only the view in expert circles, and indeed the strong public and media response to the Bulger case galvanised government action (this will be explored further in Chapter Two). To give an understanding of the nature of the response, the Daily Star’s headline after Bulger’s killers were convicted read: ‘How do you feel now, you little bastards?’.170

1.4 Technology: Exploring an Unknown Environment

The role that technologies played in the trend toward indoor childhoods from the 1980s onwards was different to that of urban landscapes or strangers. Cars and ‘creeps’ kept children inside through fear, creating an atmosphere where many parents decided it was too dangerous to let their children out of sight. Technology, on the other hand, was something about the indoors that was attractive to children, something to make them choose it over outside play, or indeed just something to do when they weren’t allowed out.

By 1980 97% of UK households had a TV and after the first home computers launched in 1977 they too became commonplace, first in schools during the 1980s, and then in homes during the 1990s and 2000s.171 The children of this generation were thus the first to grow up with these technologies meaning that, as Peter Buchner observed in 1995, many parents’ frames of reference for childhood had become ‘invalidated’.172 The World Wide Web launched in Britain in 1991 and as household adoption grew (from 9% in 1998 to 73% in 2010) the ‘digital world’ grew as an entirely new environment of childhood that young people often understood better than adults.173 This was not the case for all children however. Just as the dangers of cars or strangers disproportionately affected BAME kids, working-class kids, and girls, access to many of the benefits of technology was more difficult for them.

As Helsper and Livingstone contend, whilst most children had access to new technology, disparity lay in the fact that white children, middle-class children, and boys were given a better-quality technical education, giving them the skills to make the most of technological opportunity.174 The GCSE for Information Technology (started in 1986) is a good example of this, as it had consistently low take-up rates among female, black, and Free School Meal students throughout the 1990s and 2000s.175 This meant, I argue, that the pervasive concept of the universal child that had for so long gone unnoticed in expert circles started to adopt a new characteristic – that the universal child was technologically literate. Digital devices, conceived of as universal tools, fell prey to the same notions of the universal child, which meant little provision was made for accommodating differences.

Technology was not seen only as a source of opportunity, however, as from the 1980s onwards media and public fears grew significantly over the negative impacts that TVs, games consoles, and computers might have on childhood. Technology’s effects on addiction, obesity, anxiety, bullying, social exclusion, and antisocial attitudes were all talking points. Perhaps the ‘white heat of technology’ was too hot for kids to handle? Unlike fears over cars and strangers, though, this fear emphasised the dangers of the indoors over the outdoors. The theme of the BBC show Why Don’t You? (which ran from 1973 to 1995) asked why children did not ‘just switch off your television set and go do something less boring instead’.176 The irony that this was a TV programme is self-evident, and was not lost on children at the time,177 but the greater irony is that after 1988 the show mostly dropped its central message to morph into a more standard drama, resulting in a threefold increase in viewing numbers from 0.9 to 3 million per series.178 Public and media concern (which will be examined in greater detail in Chapter Two) over the issue of ‘square-eyed’ children was common, but these concerns ultimately had little impact on the technologisation of youth, and the fact that parents were choosing to keep their kids at home more than ever in the face of cars and strangers only accelerated the process.

Expert discourse, both in academia and government, took a different path to the popular. As part of efforts to expedite Britain’s continuing post-war transition from a manufacturing to a services economy, policymakers under Thatcher, Major, and Blair encouraged the development and adoption of, and education in, new technologies.179 Indeed, the computer was at the heart of this effort, and the perception that children needed education in digital literacy was strong, with Thatcher’s government promising to ‘put a microcomputer into every secondary school in the country by the end of 1982’ because, in her own words:

We must remember that today’s school children will still be working in the year 2030… My generation has perhaps been too cautious about accepting new technology in micros. Younger people are quick to use new things and have an aptitude for them… familiarity with keyboards and TV screens will help them to take in their stride the new technologies on the shop floor, in the office and in the home.180

Similarly, Blair’s commitment to ‘education, education, education’ was in large part underpinned by an understanding that ‘the age of achievement will be built on new technology’, and a promise to connect all schools, colleges, and universities to the ‘information superhighway’.181 In regard to TV, Thatcher described it as ‘one of the great growth industries, creating jobs, entertainment, inspiration and interests’.182 It is unsurprising, then, that whilst in government neither Thatcher nor Blair took steps to significantly restrict or regulate children’s TV or other media. The closest thing to this was the founding of Ofcom in 2003 under the Communications Act, which formalised the requirement to consider ‘the vulnerability of children’ when deciding the media they could be shown.183 Ofcom’s establishment did not, however, respond to contemporary fears over the dominance of TV in children’s lives; its remit was to concern itself strictly with the content of children’s media, as it was founded off the back of public fears over children’s exposure to violence and pornography.184 In other words, regulators were not concerned about how much TV children were watching, only that they weren’t watching ‘the wrong things’.

During the 1990s and 2000s, in response to reductions in unstructured outdoor play time, many more families started to send their children to pre-booked sessions for sports, hobbies, and lessons, which often came with a price tag.185 This commercialisation of play meant that opportunities for free play, in both senses of the word, were further reduced and considered lower status than those with an associated cost, as the price connoted quality.186 This disadvantaged families without the money or time to take their children to such sessions, pushing them by necessity towards indoor play and technologies like the TV. A 2001 study for British Telecom (BT), which found that working-class children were significantly more likely to have a TV, games console, or video recorder in their room than middle-class children, was indicative of this fact and showed how the use of technology was linked to a lack of access to outdoor environments.187 It was also the case that middle-class parents were generally more receptive to arguments about the dangers of the TV than both working- and upper-class parents, and therefore stricter over their children’s access to it.188